AI agents are already creating real business results. The challenge today isn’t whether AI works — it’s proving where the value came from.

That’s the AI attribution problem. When costs drop or speed improves, founders ask: Was it the AI? The team? Or a process change? Without clarity, AI value stays invisible.

1. Why AI Value Stays Invisible

Most companies implement AI as a "black box." They see an improvement in the bottom line, but they can't tie it back to a specific AI decision. This lack of visibility leads to skepticism from stakeholders and makes it difficult to justify further investment.

The AI attribution problem occurs when the impact of artificial intelligence is subsumed by general operational improvements. To truly understand the ROI, you must be able to isolate the AI's contribution from human effort and standard software automation.

"Without data, you're just another person with an opinion. With AI, without attribution, you're just an optimizer with a ghost."

— TechStream Strategy Team

2. Real-World Success: DHL and Unilever

Companies like DHL and Unilever aren't just "experimenting" with AI. They have solved the AI attribution problem by tying every AI decision to a specific business outcome.

Supply Chain Excellence: The DHL Story

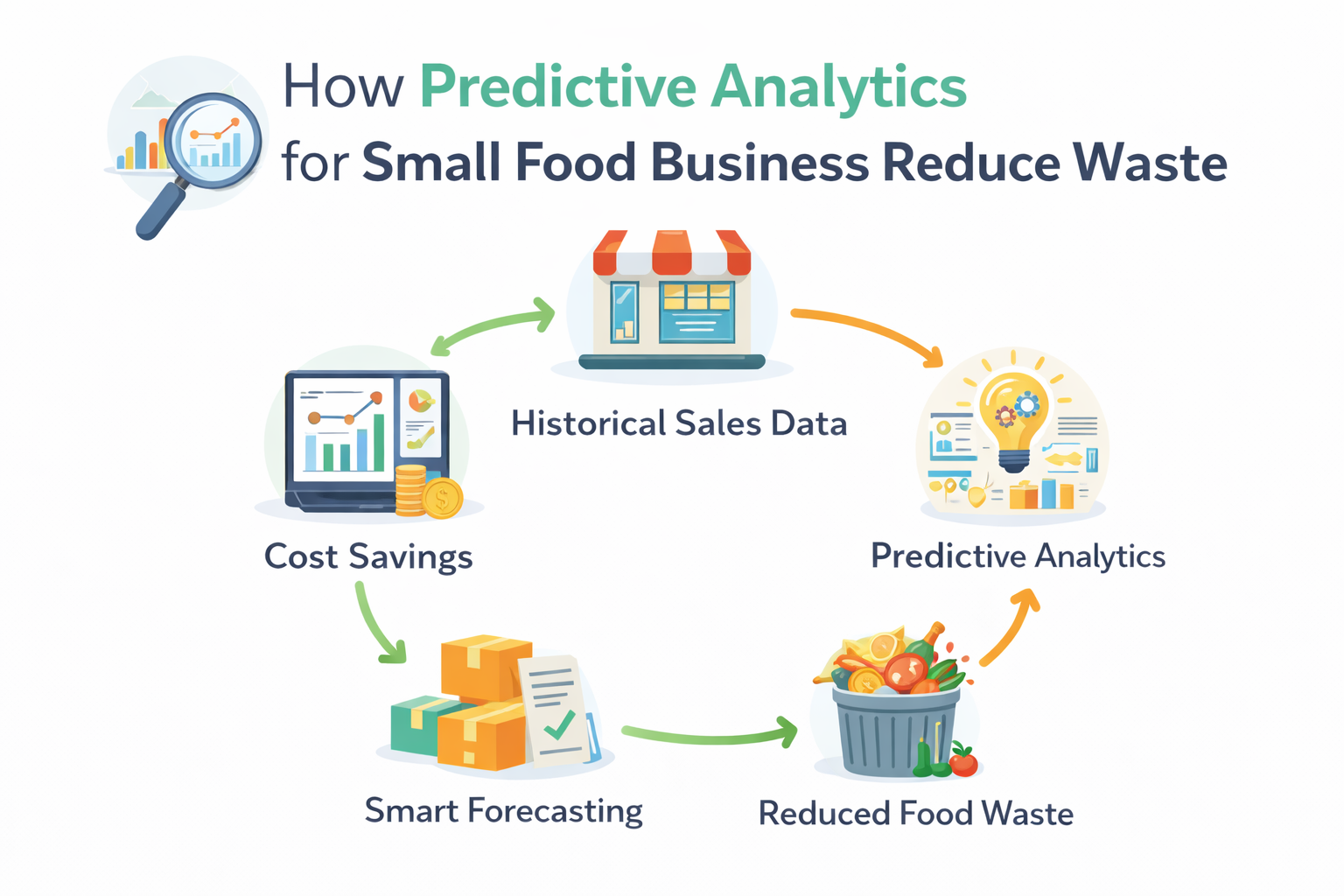

DHL uses AI-driven forecasting to manage inventory. By predicting demand more accurately, they achieved a 20–25% reduction in inventory levels. They didn't just automate alerts; they gave AI the authority to make decisions that were previously handled by manual spreadsheets.

Consumer Goods: Unilever's Forecasting Edge

Unilever applied AI agents to demand forecasting, resulting in a ~30% improvement in forecast accuracy. This led to lower waste and better on-shelf availability. Value was clear because outcomes were measured before and after AI adoption.

3. Attribution in Action: More Industry Examples

The AI attribution problem isn't limited to supply chains. Here is how other industries are proving value:

Example A: Customer Support (SaaS)

The Metric: Resolution Speed vs. Human Cost.

The Attribution: An AI agent was tasked with "level 1" technical queries. By tracking the percentage of queries resolved without human handoff, the company proved a 40% reduction in support costs while maintaining a 4.8/5 CSAT score.
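As a rough illustration of the tracking described above, here is a minimal sketch of how the share of queries resolved without a human handoff might be computed from a ticket log. The `Ticket` fields and the sample data are hypothetical and not taken from the company in the example.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    # Hypothetical fields; a real helpdesk export will name these differently.
    ticket_id: str
    resolved: bool
    handed_off_to_human: bool


def autonomous_resolution_rate(tickets: list[Ticket]) -> float:
    """Share of all tickets the agent resolved with no human handoff."""
    if not tickets:
        return 0.0
    autonomous = sum(
        1 for t in tickets if t.resolved and not t.handed_off_to_human
    )
    return autonomous / len(tickets)


# Illustrative sample data only.
sample_log = [
    Ticket("T-1", resolved=True, handed_off_to_human=False),
    Ticket("T-2", resolved=True, handed_off_to_human=True),
    Ticket("T-3", resolved=True, handed_off_to_human=False),
    Ticket("T-4", resolved=True, handed_off_to_human=False),
]

rate = autonomous_resolution_rate(sample_log)
print(f"Resolved without handoff: {rate:.0%}")
```

Counting only tickets that were both resolved and never handed off keeps the number tied to the agent's own decisions rather than to total ticket volume.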

Example B: Sales Outreach (E-commerce)

The Metric: Lead-to-Meeting Conversion Rate.

The Attribution: Instead of humans manually vetting leads, an AI agent scored and personalized the first three touchpoints. The result was a 3x increase in qualified meetings. Attribution was clear because the "AI-touched" cohort was compared against a "Human-only" control group.
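The cohort comparison above can be sketched in a few lines. This is an illustrative example, not the company's actual analysis: the `Cohort` class, the `relative_lift` helper, and all numbers are assumptions chosen to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    # Hypothetical structure for one group of leads.
    name: str
    leads: int
    meetings: int

    @property
    def conversion_rate(self) -> float:
        """Lead-to-meeting conversion rate for this cohort."""
        return self.meetings / self.leads if self.leads else 0.0


def relative_lift(treated: Cohort, control: Cohort) -> float:
    """Treated conversion rate expressed as a multiple of the control rate."""
    if control.conversion_rate == 0:
        raise ValueError("control cohort has no conversions; lift is undefined")
    return treated.conversion_rate / control.conversion_rate


# Illustrative numbers only.
ai_touched = Cohort("AI-touched", leads=500, meetings=60)
human_only = Cohort("Human-only", leads=500, meetings=20)

lift = relative_lift(ai_touched, human_only)
print(f"{ai_touched.name} vs {human_only.name}: {lift:.1f}x")
```

In practice the two cohorts should be assigned at random and run over the same period, otherwise differences in lead quality or seasonality can masquerade as AI impact.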

Example C: Talent Acquisition (Enterprise)

The Metric: Time-to-Interview & Quality of Hire.

The Attribution: An AI agent screened high-volume applications for technical fit. By tracking the "success rate" of AI-screened candidates in final rounds, the HR team proved that AI reduced screening time by 75% while slightly increasing the offer acceptance rate.

Comparison: Before vs. After AI Attribution

| Area | Before AI (Manual) | After AI (Attributed) |
|---|---|---|
| Planning Cycle | 7-10 Days | < 1 Hour |
| Decision Logic | Intuition/Experience | Data-Driven/Transparent |
| Error Margin | 15-20% | < 5% |

4. A Founder’s 4-Step ROI Framework

To measure AI ROI effectively, you must stop treating AI as a "software cost" and start treating it as a "virtual employee cost." Here is the framework for success:

- Define One Business Metric Per Use Case: Specificity is mandatory. If you use AI for support, measure "cost per resolved ticket," not "number of tickets handled."

- Track Decisions, Not Just Outputs: An AI writing 100 emails is an output. An AI deciding who to email based on lead score is a decision. Decisions are where the value lies.

- Compare Before vs. After: Record 30 days of manual data before deploying an agent. The "delta" in performance is your proof.

- Keep Humans Accountable: Humans own the outcomes; AI drives the speed. This ensures the system is constantly tuned for maximum ROI.
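To make the first and third steps concrete, here is a minimal sketch that computes "cost per resolved ticket" for a baseline period and an agent period, then reports the delta. The function names and every figure are hypothetical; plug in your own 30-day baseline.

```python
def cost_per_resolved_ticket(total_cost: float, resolved_tickets: int) -> float:
    """The single business metric for a support use case."""
    if resolved_tickets <= 0:
        raise ValueError("need at least one resolved ticket")
    return total_cost / resolved_tickets


def percent_change(before: float, after: float) -> float:
    """Signed change from the baseline, in percent (negative means lower)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


# Illustrative figures only: 30 days of manual work vs. 30 days with an agent.
baseline = cost_per_resolved_ticket(total_cost=10_000.0, resolved_tickets=1_000)
with_agent = cost_per_resolved_ticket(total_cost=9_000.0, resolved_tickets=1_200)

delta = percent_change(baseline, with_agent)
print(f"Before: ${baseline:.2f}  After: ${with_agent:.2f}  Delta: {delta:.1f}%")
```

Include the agent's own running costs in `total_cost` for the second period, in line with treating AI as a "virtual employee cost."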

5. Beyond Vanity Metrics

Why do so many AI projects get cancelled? Usually because the impact was explained to leadership in technical terms like "token count" or "latency." Winners apply the "So What?" test until each technical metric reaches a business outcome:

Technical Metric: Model hallucination rate dropped to 1%.

So What? Support staff spends less time correcting the AI.

Business Impact: We saved $2,000/week in labor costs while increasing accuracy.

Conclusion: The Future Belongs to the Measurable

The next winners won’t be the companies using AI — they’ll be the ones that can prove what AI delivered. Success in 2026 is about shifting your focus from "implementation" to "explanation." Stop letting your value stay invisible. Solve the AI attribution problem by making every decision measurable.
